The DeepRain project was launched to develop new approaches that combine modern machine learning methods with high-performance IT systems for data processing and dissemination, in order to produce high-resolution spatial maps of precipitation over Germany. The foundation of this project was the multi-year archive of ensemble model forecasts from the numerical weather model COSMO of the German Weather Service (DWD). Six trans-disciplinary research institutions worked together in DeepRain to develop an end-to-end processing chain which could potentially be used in a future operational weather forecasting context. The project proposal had identified several challenges which had to be overcome in this regard. In addition to the technical challenges of establishing a novel data fusion of rather diverse data sets (numerical model data, radar data, ground-based station observations), building scalable machine learning solutions, and optimising the performance of data processing and machine learning, there were various scientific challenges: the small, local-scale structures of precipitation events, the difficulty of finding robust evaluation methods for precipitation forecasts, and non-Gaussian precipitation statistics combined with highly imbalanced data sets.

When DeepRain started, the application of machine learning to weather and climate data was still very new, and there were hardly any publications or software packages available to build upon. DeepRain thus pioneered the use of modern deep learning models in the domain of weather forecasting. Concurrently, the number of publications in this new field has increased exponentially over the past three years; very often these were studies conducted in North America or China. Global players like Google, Amazon, NVIDIA, or Microsoft have in the meantime established groups of scientists and engineers to advance research on “Weather AI” and to develop (marketable) weather and climate applications with deep learning.
Therefore, the DeepRain project was very timely: it established a baseline for machine learning in weather and climate research in Germany, it allowed the consortium to explore the potential of deep learning in the context of the very large data processing effort that is needed, and it allowed the consortium to keep pace with international developments in this rapidly growing field of research.

While DeepRain could not complete the final deliverable, i.e. the construction of a prototype end-to-end workflow for high-resolution precipitation forecasts based on deep learning, all of the related research questions have been answered and all the necessary building blocks for such a workflow have been developed. In particular, modern datacube technology has been used successfully to establish four- to six-dimensional atmospheric simulation datacubes based on DWD data, which are available for extraction and analytics.

In addition to the anticipated challenges described above, severe issues materialised during the project: 1) a large-scale data loss due to hardware failures in spring 2021, 2) the COVID-19 pandemic from March 2020 until the time of writing, and 3) difficulties in finding highly skilled personnel, especially at a time when most work had to be done in a home office setting.

The main accomplishments of DeepRain are:

- Petabyte-scale data transfer of archived COSMO-DE EPS forecasts from the tape drives of DWD and of the RADKLIM dataset from the OpenData server to the file system JUST at JSC/FZ Jülich, organisation and cleaning of these data, and granting of data access to all project partners,

- Parallelised processing of COSMO-EPS and RADKLIM data (ensemble statistics, remapping for data fusion and for ingestion into Rasdaman),

- Implementation of Rasdaman datacube array database servers at FZ Jülich and ingestion of several TBytes of weather data,

- Establishing links from the Jülich Rasdaman servers to the EarthServer datacube federation,

- Further development of Rasdaman to accelerate data ingestion and retrieval, add user-defined functions for the analysis of topographic data, define a new coordinate reference system for rotated-pole coordinates, and prepare for interfacing with machine learning workflows,

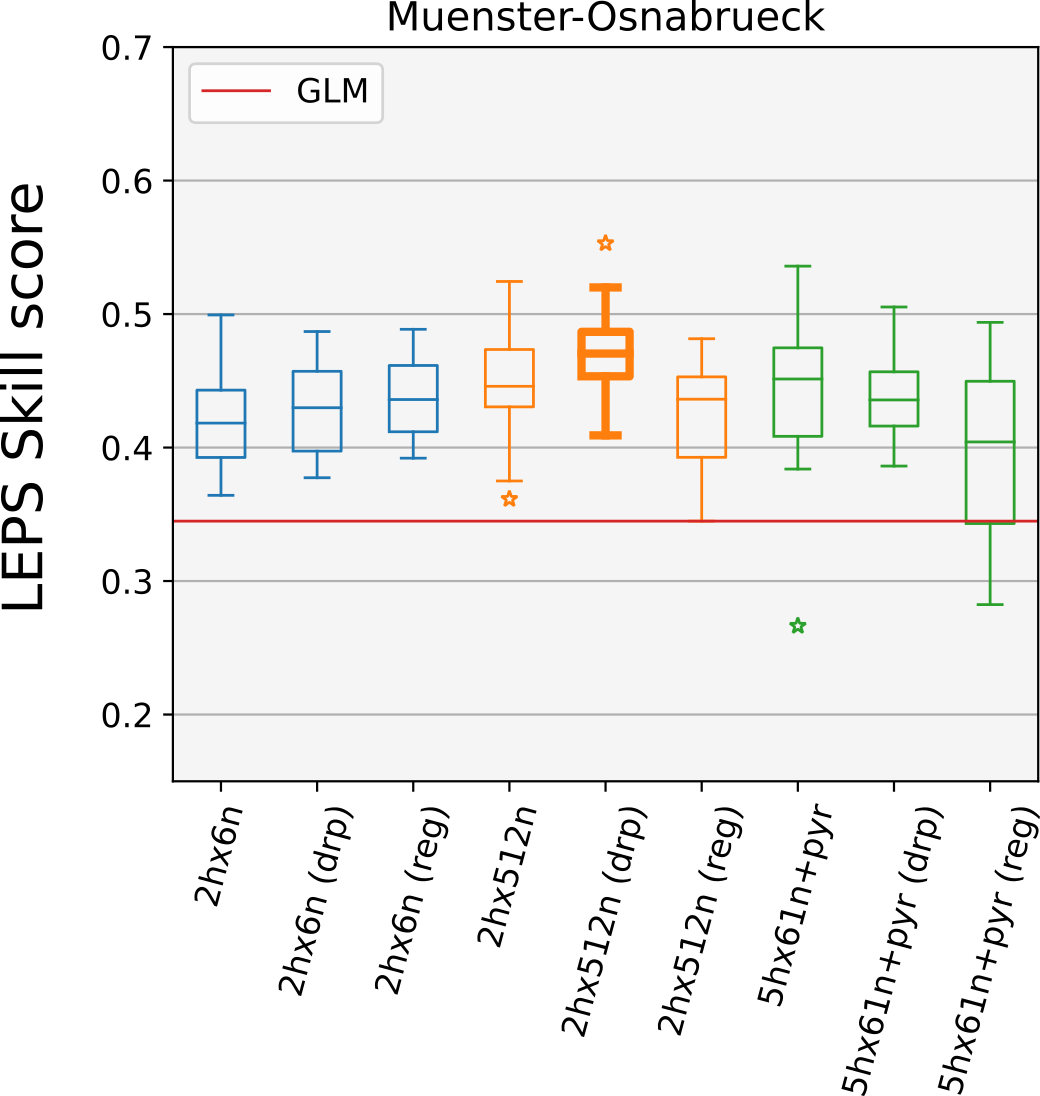

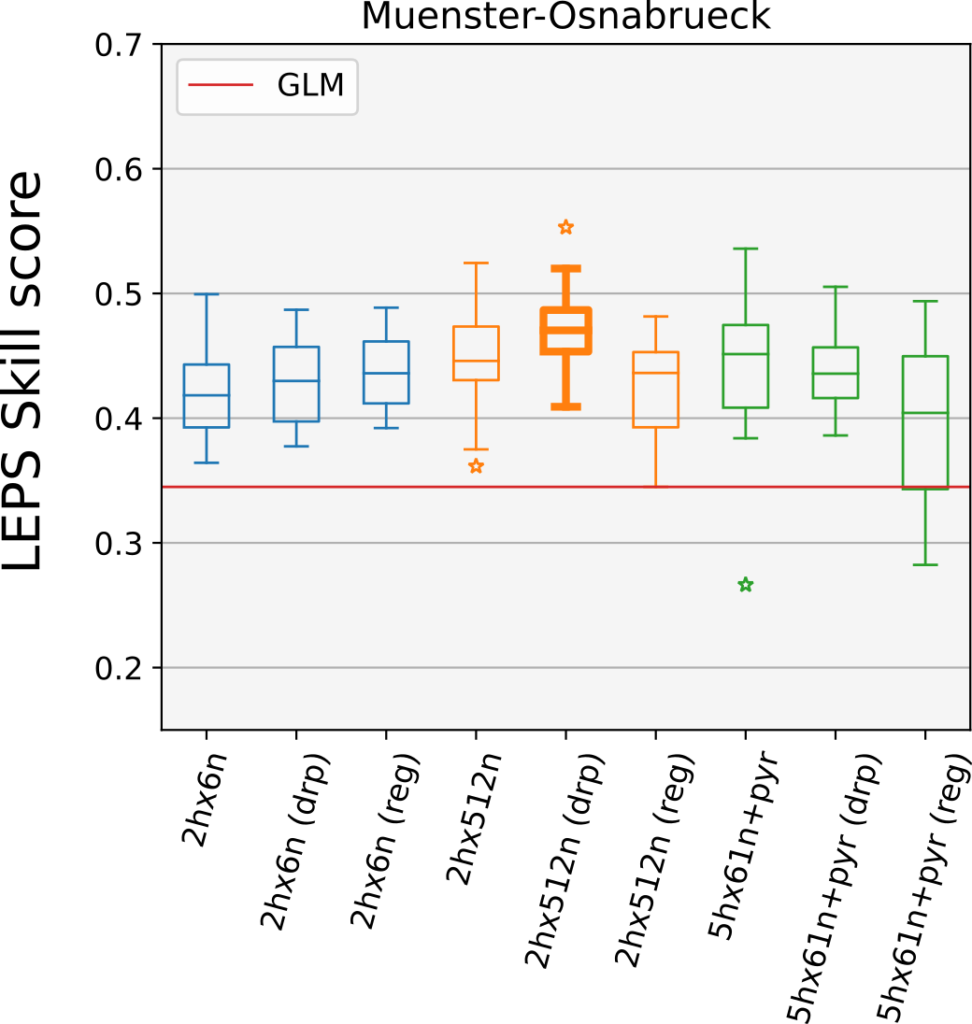

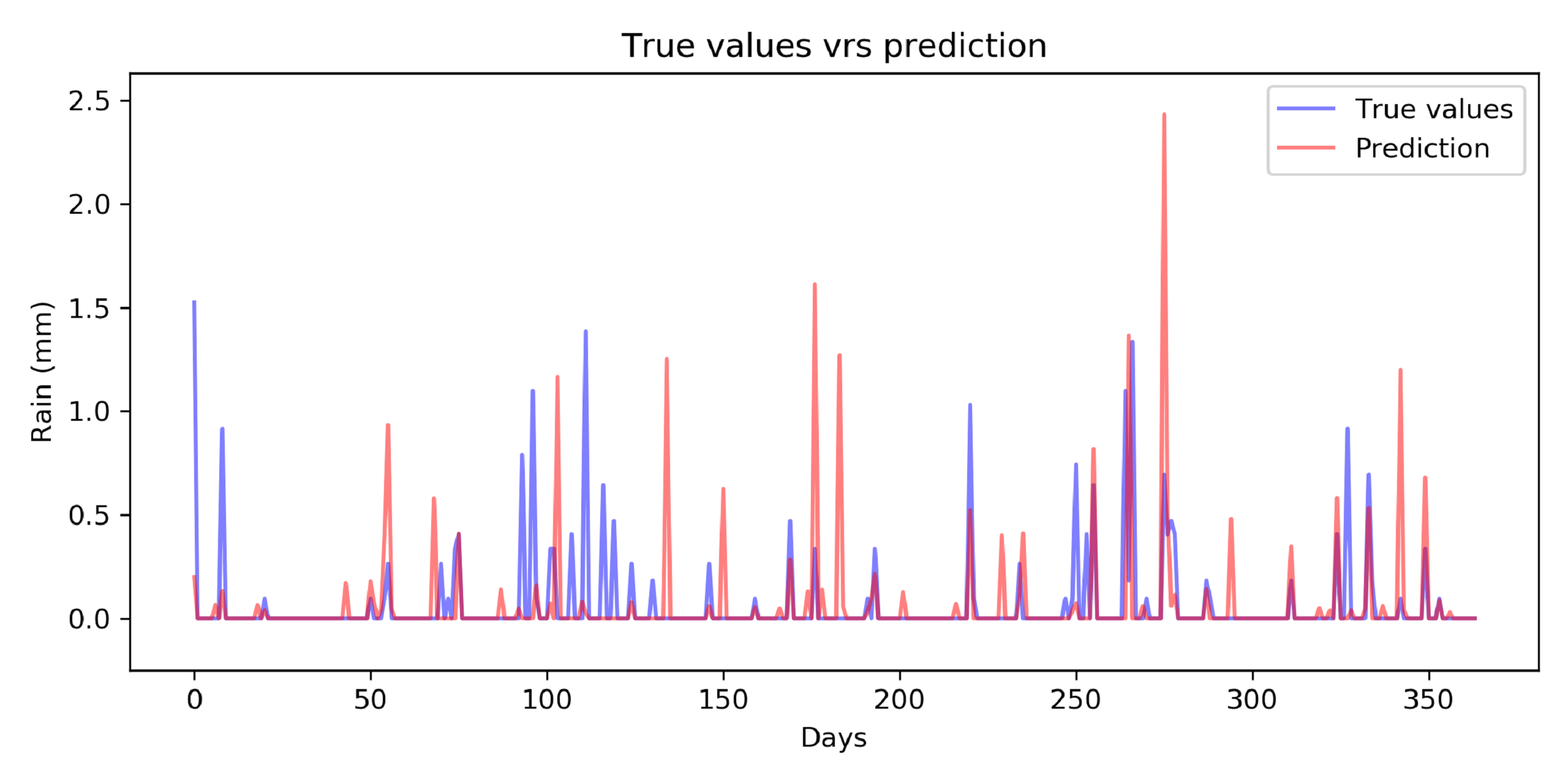

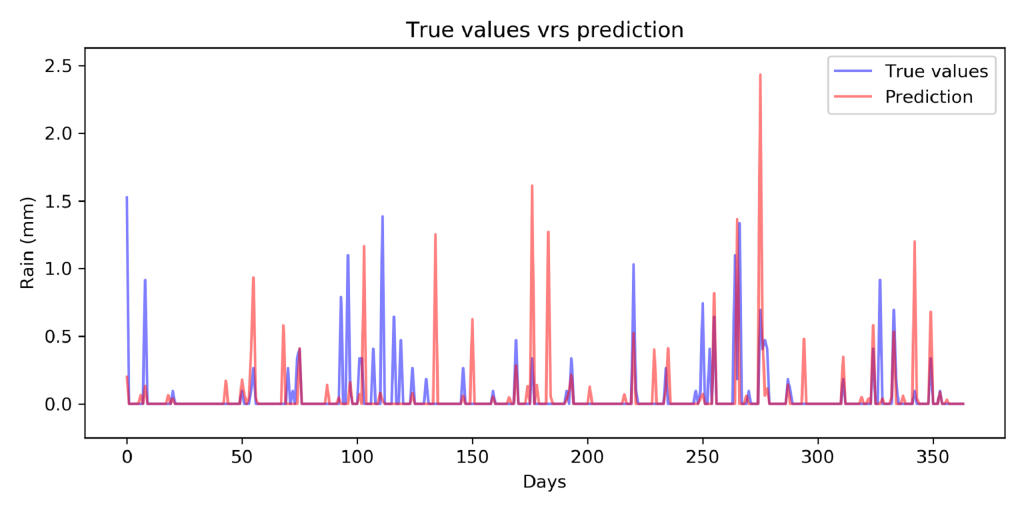

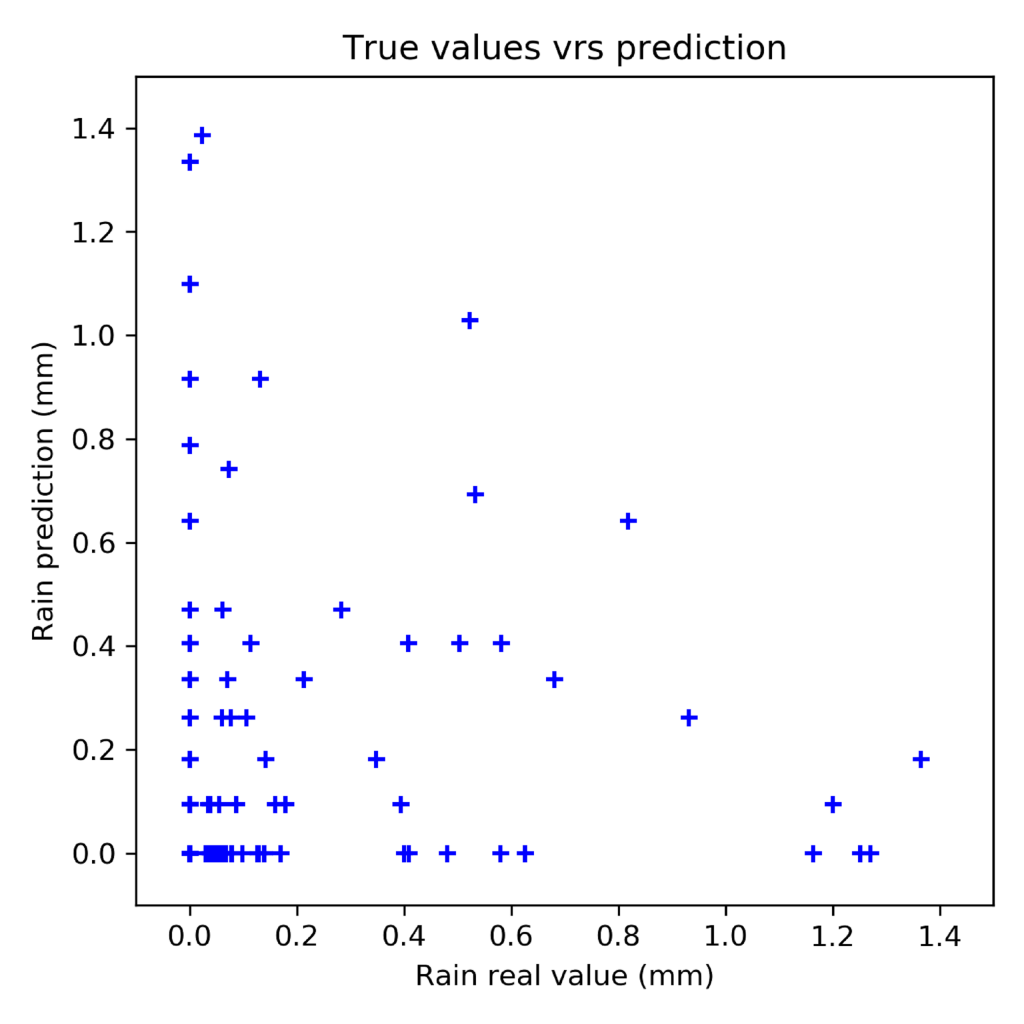

- Development of statistical downscaling techniques and machine learning models to:

  - Generate dichotomous and quantitative precipitation forecasts at station sites,

  - Generate area forecasts at the RADKLIM radar data resolution,

- Exploration of new verification statistics based on partial correlations and regression boosting.
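As a rough illustration of two terms used in the list above, ensemble statistics and dichotomous (yes/no) forecasts, the following minimal Python sketch derives both from the ensemble members at a single grid point or station. It is not the DeepRain code: the function names, the 0.1 mm threshold, the 50 % decision rule, and the sample values are all assumptions chosen for illustration.

```python
from statistics import mean, pstdev


def ensemble_stats(members):
    """Return (ensemble mean, ensemble spread) for one location.

    The spread is taken as the population standard deviation of the members.
    """
    return mean(members), pstdev(members)


def exceedance_probability(members, threshold_mm=0.1):
    """Fraction of ensemble members with precipitation above the threshold."""
    return sum(1 for m in members if m > threshold_mm) / len(members)


def dichotomous_forecast(members, threshold_mm=0.1, min_probability=0.5):
    """Yes/no rain forecast.

    True if at least `min_probability` of the members exceed the threshold
    (a hypothetical decision rule, used here only as an example).
    """
    return exceedance_probability(members, threshold_mm) >= min_probability


# Made-up hourly precipitation values (mm) from an 8-member ensemble.
members = [0.0, 0.0, 0.2, 1.5, 0.0, 3.1, 0.4, 0.0]

ens_mean, ens_spread = ensemble_stats(members)
prob_rain = exceedance_probability(members)
rain_yes_no = dichotomous_forecast(members)
```

A quantitative forecast would use values such as `ens_mean` directly, whereas the dichotomous forecast only states whether the chosen threshold is expected to be exceeded.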

In this report, we provide a detailed overview of the work and the achievements within the DeepRain project. This report is organised in five sections: In Section 1, we present the deliverable plan from the project proposal and provide information on the delivery state of each task separately to allow for a compact comparison between the project plan and its output. In Section 2, we then outline in detail the work carried out in the project for each work package individually. The expected outcome from the project achievements as well as possible future benefits are discussed in Section 3. In Section 4, we give a general overview of the progress made in the research fields related to DeepRain; specifically, these are machine learning for precipitation forecasting, precipitation forecast evaluation methods, big data handling, and FAIR data practices. Finally, Section 5 lists all the journal publications, data sets, software packages, and planned submissions resulting from the DeepRain project. Section 6 contains the list of references.

Link to full final report: https://hdl.handle.net/2128/33144